Sui generis

2024

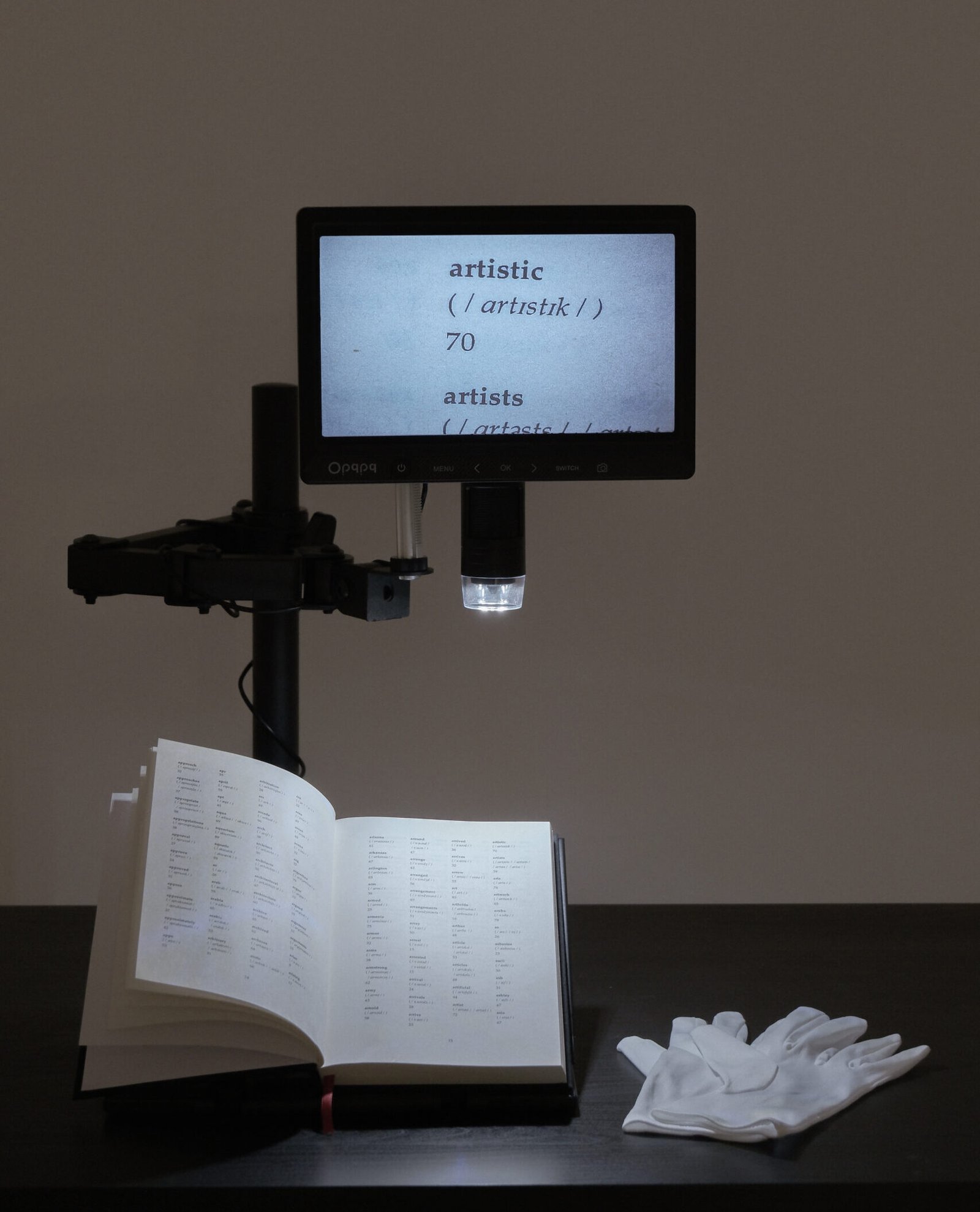

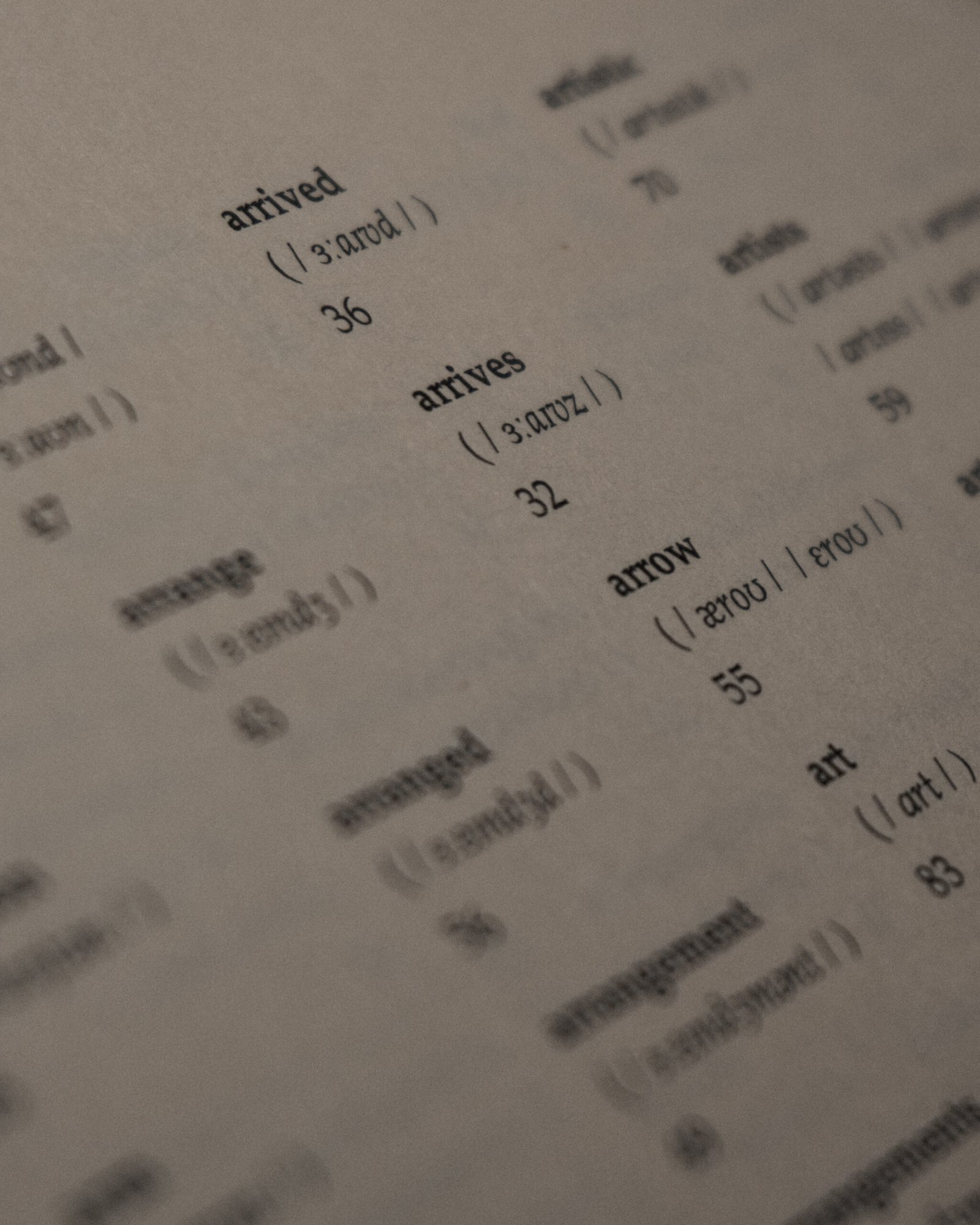

Sui generis investigates the ideas of homophily, correlation and bias in machine learning models, as well the statistical projections they produce. The artefact that resulted from this initial study is a “personalised” dictionary. In this dictionary each word receives, instead of a meaning, a classification between 0 and 100 predicted by a machine learning model that the artist trained on her own preferences.

Sui generis

2024

TECHNICAL INFORMATION

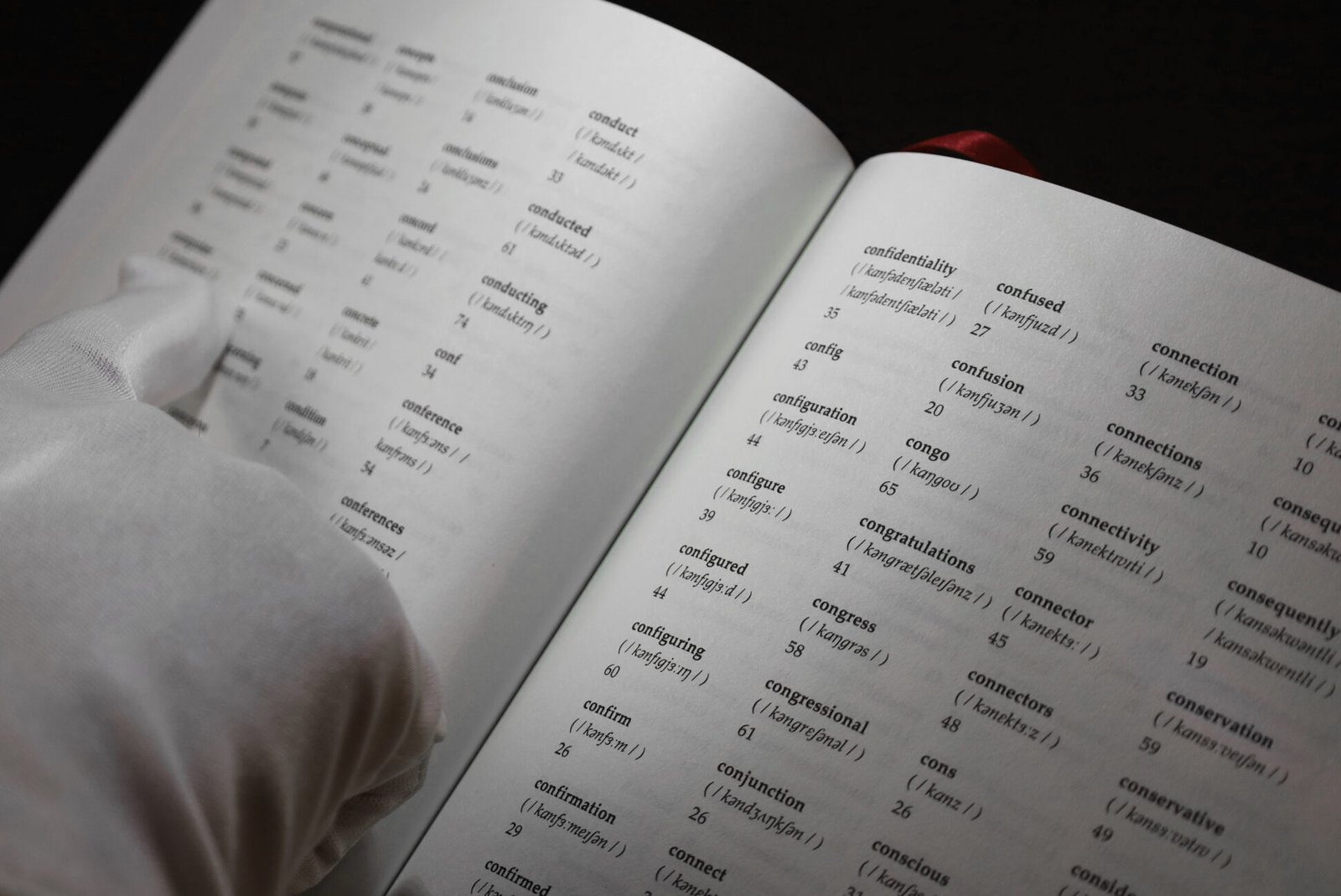

Printed publication (dictionary), digital microscope, white gloves, table and stool, custom machine learning model

SELECTED EXHIBITIONS

Prémio A Arte Chegou ao Colombo, Museu Nacional de Arte Contemporânea, Lisboa, PT [2025]

Mostra Nacional de Jovens Criadores, Museu de Lamas, Santa Maria da Feira, PT [2024]

Corrente de Ar Volume IV, Beato Innovation District, Lisboa, PT [2024]

Sui generis exposes how machine learning reduces human complexity to statistical abstractions. Because these systems are built to decide, and decisions are always biased, I approach machine learning algorithms as ‘judgement machines’. The work materialises this position through an algorithm that infers (or perhaps, fails to infer) how much I like or dislike a given word on a scale from 0 to 100.

I constructed a small dataset of words and assigned each of them a numerical preference on that same scale (an intentionally absurd task). This dataset was used to fine-tune a pre-trained language model, prompting it to output percentages when given a word. While the data appears personal, the original model already carries vast, pre-existing associations; my training merely refines its behaviour, embedding inherited bias into the appearance of individual preference.

The resulting classifications are materialised as a dictionary. As a symbol of universal knowledge, it instead reveals the impossibility of true personalisation: each number exposes the model’s limits. Displayed within a clinical setting, visitors examine the book using white gloves and a digital microscope, entering a loop of scrutiny where subjective data is objectified, only to become subjective again through the viewer’s judgement.

The algorithm ultimately reflects countless unseen contributors embedded within its training data. Where do other people’s preferences surface in these supposed personal classifications, and how does this homogenised entity shape my own sense of identity?